TGVP Report - AI Agent Infrastructure in 2026

Written by Vignesh Radhakrishnan (Kellogg MBA '26)

Why the Bottleneck Isn't Models

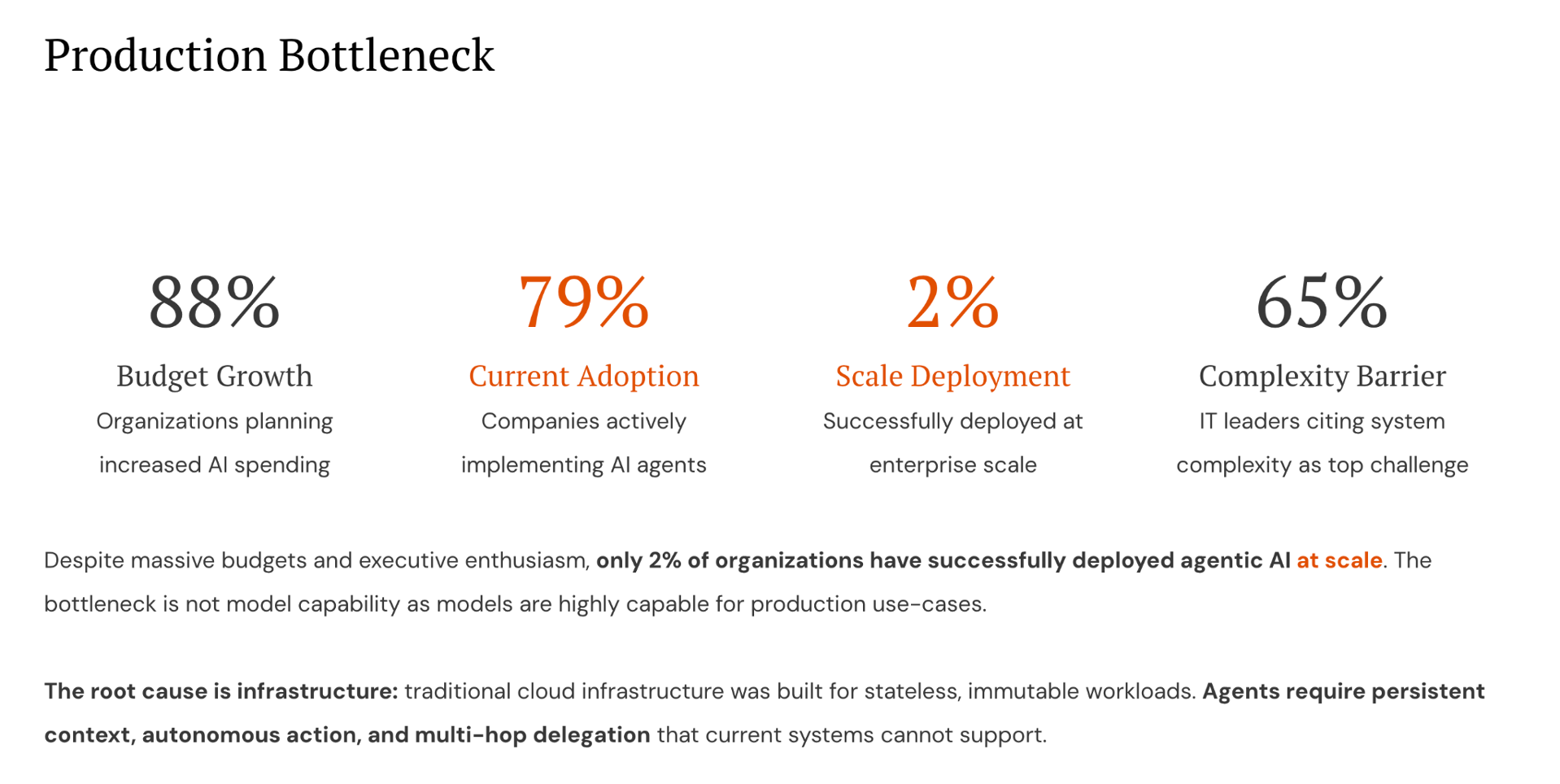

2026 has been a pivotal year for AI agents. As enterprises move from LLM wrappers to autonomous workflows, the sector has attracted unprecedented attention. PwC reports that 79% of companies are actively adopting AI agents, with 88% planning to increase AI budgets. Yet only 2% have deployed agents at scale.

There's a problem hiding behind the hype.

The gap between adoption and production has little to do with model quality. Models are good enough. The bottleneck is infrastructure.

In this review, we outline a four-layer architecture that agents require and traditional cloud infrastructure lacks, followed by three investment signals drawn from our conversations with 100+ founders across AI infrastructure, application, and security verticals.

Four Layers for Agent Architecture

Traditional cloud infrastructure was built for stateless, immutable workloads. A request comes in, gets processed, goes out. Agents break this model.

Agents maintain persistent context across sessions. They take actions with consequences. They delegate to other agents in multi-hop chains. They need authorization, auditing, and governance at every step. The primitives that power modern cloud computing (containers, serverless functions, session-based authentication) were not designed for this. Enterprises feel it. KPMG found that 65% of IT leaders cite system complexity as their top barrier.

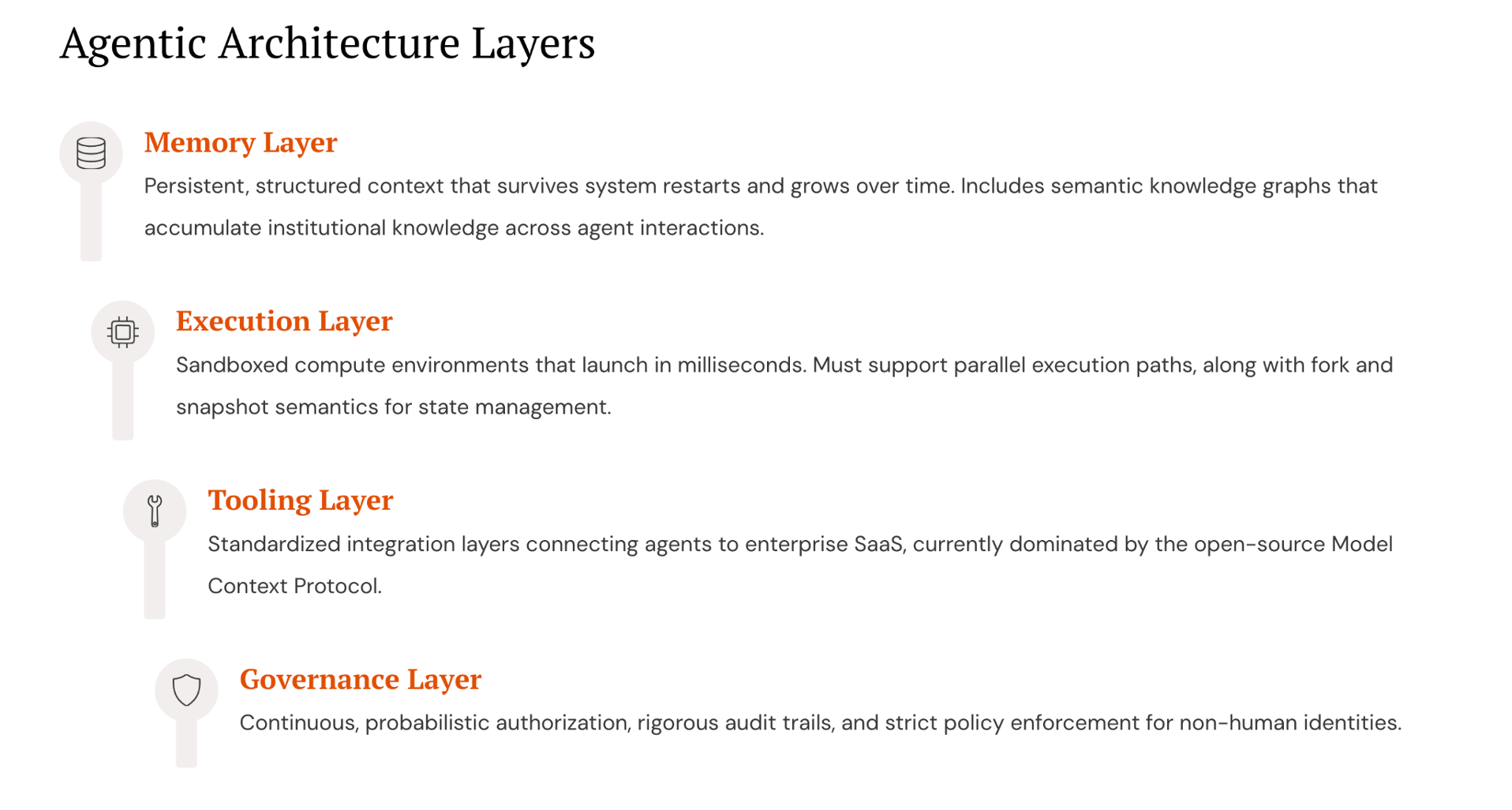

What agents need is a new stack with four layers:

- Memory: Persistent, structured context that survives system restarts and grows over time. This includes semantic knowledge graphs that accumulate institutional knowledge across agent interactions.

- Execution: Sandboxed compute environments that launch in milliseconds. These must support parallel execution paths, along with fork and snapshot semantics for state management.

- Tooling: Standardized integration layers connecting agents to enterprise SaaS. The Model Context Protocol is emerging as the dominant standard, with 97M+ monthly SDK downloads and backing from OpenAI, Google, and Microsoft.

- Governance: Continuous, probabilistic authorization with rigorous audit trails and strict policy enforcement for non-human identities.
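To make the "fork and snapshot semantics" in the execution layer concrete, here is a minimal sketch in Python. It is a toy, in-process illustration only: `AgentState` and `Sandbox` are hypothetical names, not the API of any product mentioned in this report, and a real sandbox would isolate processes and filesystems rather than copy a dictionary.

```python
import copy
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class AgentState:
    """Hypothetical stand-in for an agent's persistent working state."""
    memory: Dict[str, str] = field(default_factory=dict)
    step: int = 0


class Sandbox:
    """Toy execution environment; a real one would isolate processes and files."""

    def __init__(self, state: Optional[AgentState] = None) -> None:
        self.state = state if state is not None else AgentState()
        self._snapshots: Dict[str, AgentState] = {}

    def run(self, key: str, value: str) -> None:
        # Stand-in for an agent action that mutates state.
        self.state.memory[key] = value
        self.state.step += 1

    def snapshot(self, name: str) -> None:
        # Deep copy so later actions cannot alter the saved state.
        self._snapshots[name] = copy.deepcopy(self.state)

    def restore(self, name: str) -> None:
        # Roll back to a saved state, e.g. after a failed action.
        self.state = copy.deepcopy(self._snapshots[name])

    def fork(self) -> "Sandbox":
        # The fork starts from the current state, then evolves independently.
        return Sandbox(copy.deepcopy(self.state))


base = Sandbox()
base.run("plan", "draft")
base.snapshot("checkpoint")

branch = base.fork()                 # explore an alternative in parallel
branch.run("plan", "risky rewrite")

base.run("plan", "overwritten")
base.restore("checkpoint")           # undo the last action on the main path
```

The point of the sketch is that the main path and the fork never share mutable state, so an agent can try a risky action in a branch and discard it without consequences for the original.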

Companies like Letta (from the Berkeley Sky Computing Lab that produced Databricks and Anyscale) are building the memory layer. Daytona is tackling execution with sandboxed development environments designed specifically for agent workloads. MCP is rapidly becoming the standard integration protocol, though the managed infrastructure above it remains an open opportunity. Startups like Astrix Security are building governance platforms to secure non-human identities where traditional IAM falls short.

Three signals from our diligence shaped where we see durable value in this stack.

Signal One: Stateful Services Make The Moat

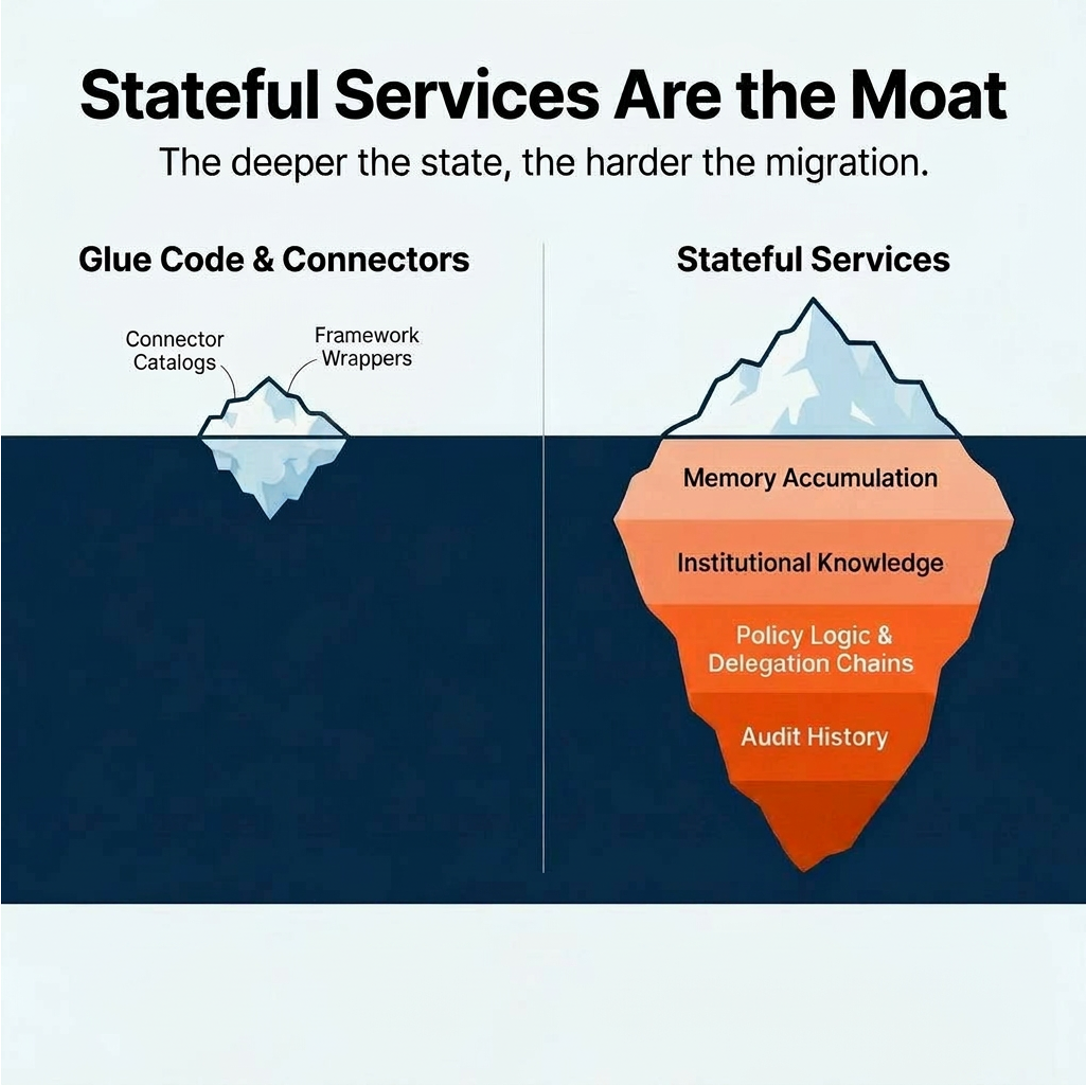

Not all agent infrastructure is created equal. There is an important distinction between stateful services and glue code.

Connector catalogs and framework wrappers are vulnerable to commoditization. They don't get more valuable the longer a customer uses them. Stateful services are different. The longer an agent runs on a memory platform, the more institutional knowledge accumulates. Migrating away means starting over entirely. The longer an enterprise runs agent authorization through a governance platform, the more policy logic, delegation chains, and audit history become embedded.

A hardware tailwind reinforces this dynamic. GPU utilization is increasingly bottlenecked by memory bandwidth, and DRAM pricing is projected to rise. As memory becomes the binding constraint across the AI stack, platforms that efficiently manage persistent agent state gain a structural cost advantage. The economics of memory make statefulness more valuable, not less, over time.

Signal Two: Data Concentration Meets Model Fragmentation

Enterprise data tends to concentrate in a single cloud. An enterprise with its data lake in S3 will run agents close to that data, on AWS. This concentration is a structural reality of cloud economics: egress costs, latency, compliance.

Model usage, however, is fragmented. An enterprise might use Claude for coding, GPT for reasoning, Gemini for multimodal processing, and open-source models for cost-sensitive workloads. No single model dominates across all capabilities, and enterprises actively diversify to avoid lock-in. Gartner reported a 1,445% surge in multi-agent system inquiries from Q1 2024 to Q2 2025.

This creates a structural gap. Bedrock, Azure AI, and Vertex each work best within their own ecosystem, because each cloud provider's agent infrastructure naturally favors its own models. But as agent fleets grow across model providers, the need for infrastructure that works across all of them becomes unavoidable.

The startup opportunity is the layer that runs inside the customer's cloud, respecting where data lives, while serving agents regardless of which model orchestrates them. Datadog offers a precedent: it won by deploying inside AWS but monitoring workloads across every cloud. The agent infrastructure equivalent is to deploy in the customer's environment for data proximity and provide memory, tooling, and governance that work with several proprietary and open-source models simultaneously.
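One way to picture such a layer is a runtime that owns the memory and treats each model as a swappable backend. The sketch below is illustrative only: `ModelClient`, `StubModel`, `SharedMemory`, and `AgentRuntime` are hypothetical names, and the stub models stand in for real provider SDKs, which are deliberately not called here.

```python
from typing import Dict, List, Protocol


class ModelClient(Protocol):
    """Anything that can turn a prompt into a reply (assumed interface)."""
    def complete(self, prompt: str) -> str: ...


class StubModel:
    """Placeholder for a real provider client; it only echoes the task."""

    def __init__(self, label: str) -> None:
        self.label = label

    def complete(self, prompt: str) -> str:
        # The task is the last line of the prompt; earlier lines are context.
        return f"[{self.label}] handled: {prompt.splitlines()[-1]}"


class SharedMemory:
    """Memory owned by the infrastructure layer, not by any one model."""

    def __init__(self) -> None:
        self._events: List[str] = []

    def remember(self, event: str) -> None:
        self._events.append(event)

    def recall(self) -> str:
        return "\n".join(self._events)


class AgentRuntime:
    def __init__(self, memory: SharedMemory, models: Dict[str, ModelClient]) -> None:
        self.memory = memory
        self.models = models

    def run(self, model_name: str, task: str) -> str:
        # Every model sees the same accumulated context before its task.
        prompt = self.memory.recall() + "\n" + task
        reply = self.models[model_name].complete(prompt)
        self.memory.remember(f"{model_name}: {task}")
        return reply


runtime = AgentRuntime(
    SharedMemory(),
    {"model_a": StubModel("A"), "model_b": StubModel("B")},
)
first = runtime.run("model_a", "summarize ticket 1")
second = runtime.run("model_b", "draft a reply")
```

Because the memory lives in the runtime rather than in a provider's SDK, switching the second call to a different model does not reset the context that the first call built up.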

First-class support across competing model ecosystems is unlikely to come from any single cloud provider. That gap is structural.

Signal Three: Governance Is the Biggest Need

This is where our diligence work provided the strongest signal.

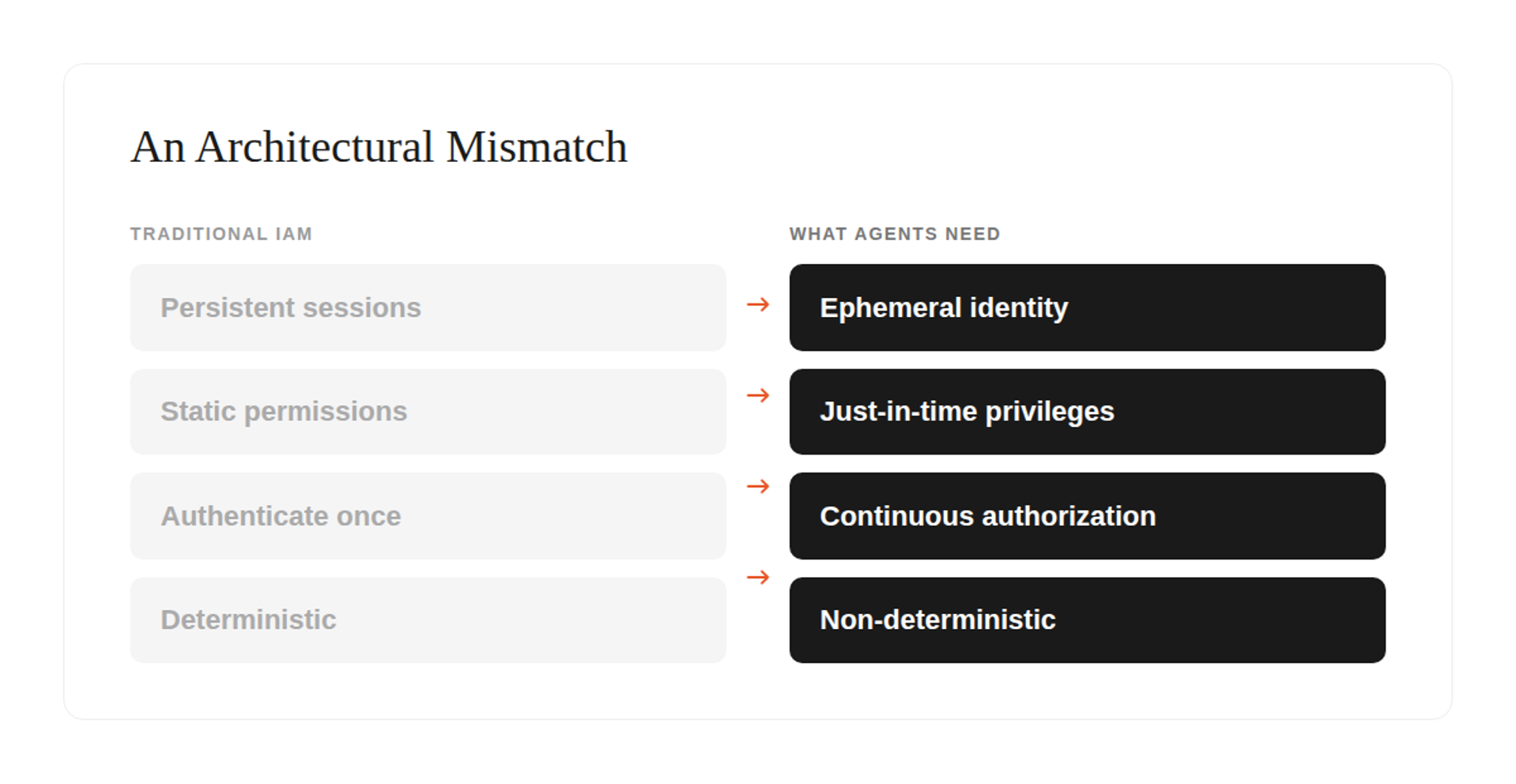

Traditional identity and authorization systems were built for a world where permissions are static and actions are deterministic. Agents break both assumptions. Agent identities are ephemeral, spun up and torn down dynamically, and agent behavior is non-deterministic: the same agent can act differently on each run. Authorization must therefore be computed at runtime, because you cannot predict at the outset what an agent will do.

Traditional session-token architectures were designed for 'authenticate once, get access for a fixed period.' Agents need just-in-time privileges, zero standing permissions, and authorization that adapts mid-task. It's an architectural mismatch, not a feature gap.
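The contrast with session tokens can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of any vendor's product: `Grant` and `JitAuthorizer` are hypothetical names, the policy is a plain dictionary mapping a task type to the actions it may need, and time is passed in explicitly so the behavior is easy to follow.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Set


@dataclass(frozen=True)
class Grant:
    agent_id: str
    action: str        # e.g. "payments:refund"
    expires_at: float  # seconds, on whatever clock the caller supplies


class JitAuthorizer:
    """Issues short-lived, single-action grants; nothing is allowed by default."""

    def __init__(self, policy: Dict[str, Set[str]]) -> None:
        self._policy = policy            # task type -> actions it may need
        self._grants: List[Grant] = []

    def request(self, agent_id: str, task: str, action: str,
                now: float, ttl: float = 30.0) -> Optional[Grant]:
        # Decide at request time whether this task justifies this action.
        if action not in self._policy.get(task, set()):
            return None
        grant = Grant(agent_id, action, now + ttl)
        self._grants.append(grant)
        return grant

    def is_allowed(self, agent_id: str, action: str, now: float) -> bool:
        # Expired grants are dropped, so privileges never become standing.
        self._grants = [g for g in self._grants if g.expires_at > now]
        return any(g.agent_id == agent_id and g.action == action
                   for g in self._grants)


authz = JitAuthorizer({"refund": {"payments:refund"}})
standing = authz.is_allowed("agent-7", "payments:refund", now=0.0)
denied = authz.request("agent-7", "refund", "payments:delete", now=0.0)
grant = authz.request("agent-7", "refund", "payments:refund", now=0.0, ttl=30.0)
during = authz.is_allowed("agent-7", "payments:refund", now=10.0)
after = authz.is_allowed("agent-7", "payments:refund", now=31.0)
```

Here the agent holds no permission before it asks (`standing` is false), receives only the action its task justifies, and loses it once the time-to-live lapses. A production system would also need revocation, delegation chains, and audit logging, which this sketch omits.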

One signal that validated this thesis came from an interesting direction: payments. Mastercard launched Agent Pay in April 2025, introducing "Agentic Tokens" for autonomous transactions. Visa introduced the Trusted Agent Protocol with 10+ partners including Microsoft, Shopify, and Stripe. When the world's two largest payment networks are building agent identity infrastructure, the governance thesis is validated at the highest possible level.

In our diligence, we spoke with a VP of Engineering at a $2B+ enterprise who described how his team evaluated leading IAM providers and found them architecturally mismatched for agent use cases. His company has invested $2B in agent capabilities and is targeting 20-30 production agents by 2026. The pattern repeated across multiple conversations: enterprises are rejecting incumbent IAM for agent workloads.

The urgency is reflected on the capital side as well. In a reference call, an active investor in the space described authorization moving from a moderate priority to near the top of their investment criteria with the rise of agents, noting that the market remains very early with the biggest competitor being internal builds.

Gartner predicts AI governance spending will reach $492M in 2026 and surpass $1B by 2030. "Guardian Agent" technologies are expected to capture 10-15% of the total agentic AI market by 2030. And there's a compounding dynamic at play: better frontier models directly increase the urgency for governance. Highly capable, autonomous models can cause significantly more damage without proper, deterministic guardrails.
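As a quick check on what the spending forecast implies, the two endpoints can be converted into a compound annual growth rate. The sketch below assumes "by 2030" means four years of growth from the 2026 figure and uses exactly $1B as the endpoint, so the result is a lower bound on the implied rate.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


# $492M in 2026 growing to at least $1,000M by 2030 (figures from the text).
rate = implied_cagr(start=492, end=1000, years=2030 - 2026)
print(f"Implied minimum CAGR: {rate:.1%}")
```

Under those assumptions the forecast implies growth of roughly 19% per year or more.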

A Common Conundrum: Hyperscalers vs. Startups

Every major cloud provider has entered the agent infrastructure space. AWS launched Bedrock AgentCore with managed memory, gateway, identity, runtime, observability, and policy enforcement. Microsoft shipped long-term memory in Foundry Agent Service. Google is rolling MCP support across Vertex AI.

These moves validate the four-layer stack. They confirm market demand. They also represent competitive risk.

However, platform incentives work against depth. Hyperscalers balance dozens of services competing for engineering resources, optimizing for breadth of coverage. Startups concentrate all resources on a single problem, optimizing for depth. This is not a criticism; it's how platform economics work. The historical pattern strongly favors startups winning alongside platform providers in the layers where depth matters.

AWS launched CloudWatch in 2009; Datadog went public in 2019 at $10B+ and now generates $2.7B in annual revenue. RDS launched in 2009; MongoDB went public in 2017 and has since reached a $15B+ market cap. Redshift launched in 2013; Snowflake went public in 2020 in what was then the largest software IPO in history.

Each time, the cloud-native default worked for basic workloads and purpose-built startups went deeper on the workloads enterprises cared most about. The same dynamic is playing out in agent infrastructure.

A Decision Framework

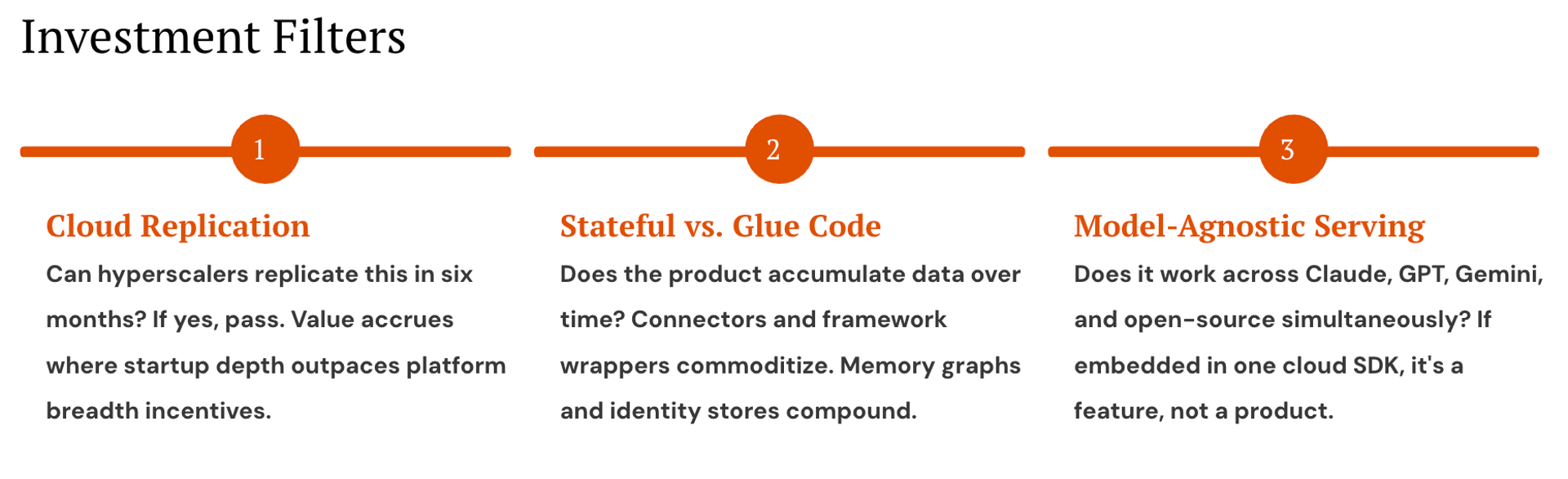

These structural signals translate into three investment filters we apply when evaluating agent infrastructure startups:

Cloud Replication: Can hyperscalers replicate this in six months? We evaluate whether a startup's core value comes from architectural depth or surface-level integration. If the product requires years of accumulated usage data, domain-specific policy logic, or deep model-agnostic optimization to function well, the replication window extends far beyond what a platform team with competing priorities will invest in. Value accrues only where startup depth outpaces platform breadth incentives.

Stateful vs. Glue Code: Does the product accumulate proprietary data over time? We draw a hard line between services that get more valuable with usage and those that don't. Memory graphs and identity stores compound. Every interaction adds context. Every policy decision refines the authorization model. Every audit event deepens the compliance record. Connector catalogs and framework wrappers do the opposite. They can be replaced easily. The test is simple. Would migrating away from this product mean starting over?

Model-Agnostic Serving: Does it work across multiple proprietary and open-source models simultaneously? As enterprises diversify across proprietary and open-source models, infrastructure locked to a single provider's SDK becomes a ceiling, not a foundation. We look for startups that deploy inside the customer's cloud for data proximity while serving agents regardless of which model orchestrates them. If the product only works within one ecosystem, it's a feature, not a product.
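The three filters can be written down as a simple checklist. The sketch below is only an illustration of the logic described above: the field names, the threshold of two model ecosystems, and both example candidates are assumptions made for the example, and the inputs remain judgment calls rather than measured values.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Candidate:
    name: str
    replicable_in_six_months: bool       # Cloud Replication
    accumulates_proprietary_state: bool  # Stateful vs. Glue Code
    model_ecosystems_supported: int      # Model-Agnostic Serving


def apply_filters(c: Candidate) -> Dict[str, bool]:
    """Return a pass/fail result for each of the three filters."""
    return {
        "cloud_replication": not c.replicable_in_six_months,
        "stateful": c.accumulates_proprietary_state,
        "model_agnostic": c.model_ecosystems_supported >= 2,
    }


def passes(c: Candidate) -> bool:
    # A candidate must clear all three filters, not just one.
    return all(apply_filters(c).values())


# Hypothetical candidates, not real companies.
memory_platform = Candidate("hypothetical memory platform", False, True, 3)
connector_catalog = Candidate("hypothetical connector catalog", True, False, 3)

print(passes(memory_platform), apply_filters(connector_catalog))
```

In this toy example the connector catalog works across several model ecosystems but still fails, because it is easy to replicate and accumulates no proprietary state.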

Concluding Thoughts

Taken together, these dynamics point to a clear investment thesis. The agent infrastructure market is growing at 44-46% CAGR. But the investable surface is narrower than the hype suggests.

The opportunity is concentrated in three areas: stateful services with accumulating switching costs, model-agnostic middleware that bridges fragmented model usage, and governance infrastructure that addresses the trust gap between what agents can do and what enterprises will let them do.

The question is no longer whether enterprises will deploy AI agents. It's whether the infrastructure exists to let them. The winners will be the companies that treat agent infrastructure not as a feature bolted onto existing platforms, but as a new category built from first principles, designed for persistent context, autonomous action, and continuous authorization.

The current bottleneck is infrastructure, not models. The companies that solve it will define the next era of enterprise software.
